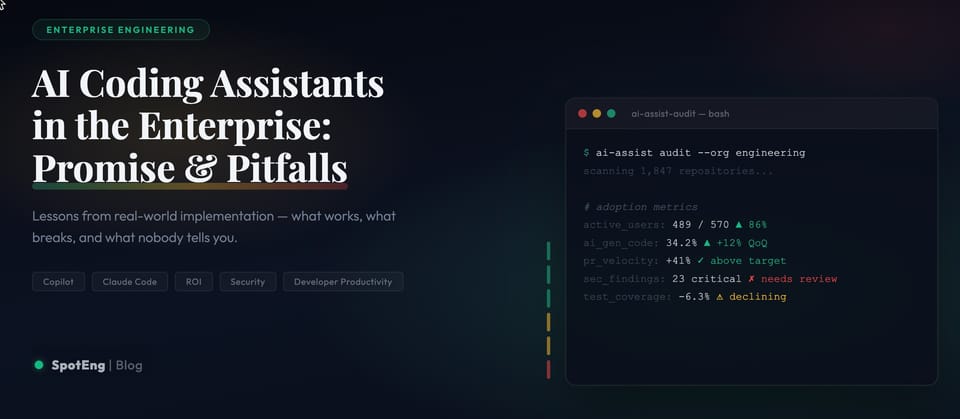

AI Coding Assistants in the Enterprise: Promise and Pitfalls from Real-World Implementation

A comprehensive study published in January 2025, on which I was one of the researchers, reports what we learned from deploying an AI coding assistant across an engineering organization of 400+ developers. This enterprise deployment offers useful lessons for organizations considering similar implementations.

Is AI Really a Game Changer? The Reality Beyond the Hype

The data presents a more nuanced picture than typical marketing claims would suggest:

The Promise

- 90% of developers reported reduced task completion time (median 20% reduction)

- 63% completed more tasks per sprint

- 72% overall satisfaction rate

- 33% acceptance rate for code suggestions

- Particularly effective for unit test generation and boilerplate code

- Significant impact on developer satisfaction and perceived productivity

- Strong performance in routine coding tasks and documentation

- Valuable assistance in learning new technologies and frameworks

The Concerns

- A separate study by Uplevel found a 41% higher bug rate among developers using AI assistance

- While developers report completing more tasks, issue throughput remained consistent

- Quality concerns with domain-specific and complex code

- Potential hidden costs in code review and bug fixing

- Risk of over-reliance on AI suggestions

- Challenges with context understanding in larger codebases

- Security implications requiring additional review processes

- Inconsistent performance across different programming languages and tasks

Detailed Deployment Journey

The organization's methodical four-phase approach provides a blueprint for successful implementation:

Phase 1: Initial Assessment (July 2023)

- 5 engineers selected for initial testing

- Focus on core functionality and integration

- Evaluation of security implications

- Assessment of impact on existing workflows

- Collection of baseline metrics

Phase 2: Trial Recruitment

- Expanded to 126 engineers (32% of development team)

- Stratified selection across different specializations

- Geographic distribution taken into account

- Various experience levels included

- Comprehensive training program implemented
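
To make the stratified selection above concrete, here is a minimal sketch of how a trial group could be drawn so that every specialization is represented in roughly the same proportion. It is an illustration under stated assumptions, not the procedure the study used: the roster, the `specialization` field, and the `stratified_sample` helper are all hypothetical, and the 32% fraction is simply borrowed from the figure above.

```python
import random
from collections import defaultdict


def stratified_sample(engineers, key, fraction, seed=0):
    """Pick roughly `fraction` of the engineers from every stratum defined by `key`."""
    rng = random.Random(seed)  # fixed seed so the selection is reproducible
    strata = defaultdict(list)
    for engineer in engineers:
        strata[engineer[key]].append(engineer)

    selected = []
    for group in strata.values():
        # Take at least one person per stratum so small groups are not left out.
        k = max(1, round(len(group) * fraction))
        selected.extend(rng.sample(group, k))
    return selected


# Hypothetical roster; names and group sizes are made up for illustration.
roster = (
    [{"name": f"be{i}", "specialization": "backend"} for i in range(50)]
    + [{"name": f"fe{i}", "specialization": "frontend"} for i in range(30)]
    + [{"name": f"da{i}", "specialization": "data"} for i in range(20)]
)

trial_group = stratified_sample(roster, key="specialization", fraction=0.32)
```

The same helper could be applied to experience level or region by changing `key`; stratifying on several attributes at once would need a combined key, which this sketch does not attempt.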

Phase 3: Two-Week Trial (August 2023)

- Structured feedback collection

- Quantitative metrics tracking

- Security compliance monitoring

- Performance impact assessment

- Evaluation of integration with existing tools

Phase 4: Full Rollout (September 2023)

- Gradual organization-wide deployment

- Continuous monitoring and adjustment

- Regular feedback collection

- Ongoing training and support

- Policy refinement based on emerging patterns

Detailed Analysis of Key Findings

Language-Specific Performance

- TypeScript, Java, Python, and JavaScript showed consistent ~30% acceptance rates

- Lower acceptance rates for HTML, CSS, JSON, and SQL

- Variation in suggestion quality across different coding contexts

- Performance differences between simple and complex code structures

- Impact of codebase size on suggestion relevance
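
Acceptance rate here means the share of shown suggestions that developers accepted. As a rough sketch of how it could be broken down by language, assume each suggestion is logged as a `(language, accepted)` pair. That event format, the `acceptance_rates` helper, and the sample data below are assumptions for illustration; they are not the study's telemetry or its results.

```python
from collections import Counter


def acceptance_rates(events):
    """Return {language: accepted / shown} for an iterable of (language, accepted) pairs."""
    shown = Counter()
    accepted = Counter()
    for language, was_accepted in events:
        shown[language] += 1
        if was_accepted:
            accepted[language] += 1
    return {language: accepted[language] / shown[language] for language in shown}


# Made-up events, not data from the study.
sample_events = [
    ("python", True), ("python", False), ("python", False), ("python", False),
    ("sql", True), ("sql", False), ("sql", False), ("sql", False), ("sql", False),
]

rates = acceptance_rates(sample_events)
```

With real logs it would also be worth reporting the number of suggestions shown per language, since a rate computed from a handful of events says little.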

Usage Patterns and Developer Behavior

- Higher acceptance rates during routine coding tasks

- Increased usage in documentation and testing

- Variable utilization across different development phases

- Impact on code review processes

- Changes in development workflow patterns

Long-term Impact Assessment

- Initial productivity gains versus maintenance costs

- Effect on code quality over time

- Impact on team collaboration

- Changes in development practices

- Evolution of coding standards

Comprehensive Quality Assurance Strategy

Organizations must implement a multi-layered approach to quality control:

Code Review Enhancement

- Additional review stages for AI-generated code

- Implementation of automated quality checks

- PR flagging system for AI contributions

- Peer review guidelines specific to AI-generated code

- Integration with existing quality processes
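
One possible way to implement PR flagging is to have developers mark AI-assisted commits and then route any pull request containing such a commit to an extra review stage. The sketch below assumes a team convention of an `AI-Assisted: true` trailer line in the commit message. The trailer name and the `needs_ai_review` helper are hypothetical, not a feature of any particular tool or something the study prescribes.

```python
def needs_ai_review(commit_messages, trailer="AI-Assisted: true"):
    """Return True if any commit message contains the (hypothetical) AI trailer line."""
    wanted = trailer.strip().lower()
    return any(
        line.strip().lower() == wanted
        for message in commit_messages
        for line in message.splitlines()
    )


# Example commit messages for a single pull request (made up).
pr_commits = [
    "Add retry logic to payment client\n\nAI-Assisted: true",
    "Fix typo in README",
]

flag_for_extra_review = needs_ai_review(pr_commits)
```

A convention like this depends on developers applying the trailer consistently, so it works best alongside the peer review guidelines listed above rather than as a replacement for them.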

Risk Management

- Security vulnerability assessment

- Performance impact monitoring

- Technical debt tracking

- Compliance verification

- Regular security audits

Performance Metrics

- Bug rate tracking by code origin

- Development velocity measurement

- Code quality metrics monitoring

- Team productivity assessment

- Long-term maintenance cost analysis
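
Tracking bug rate by code origin can be as simple as normalizing bug counts by the amount of code changed for each origin. The following sketch assumes every merged change is recorded as `(origin, lines_changed, bugs_attributed)`. The record format, the `bug_rate_per_kloc` helper, and the numbers are illustrative assumptions, not measurements from the study or from Uplevel.

```python
def bug_rate_per_kloc(records):
    """Return {origin: bugs per 1,000 changed lines} from (origin, lines, bugs) records."""
    totals = {}
    for origin, lines_changed, bugs in records:
        lines, bug_count = totals.get(origin, (0, 0))
        totals[origin] = (lines + lines_changed, bug_count + bugs)
    # Skip origins with no changed lines to avoid dividing by zero.
    return {
        origin: 1000 * bug_count / lines
        for origin, (lines, bug_count) in totals.items()
        if lines > 0
    }


# Made-up records, not data from any study.
change_records = [
    ("ai", 2000, 6),
    ("ai", 1000, 3),
    ("human", 4000, 8),
]

bug_rates = bug_rate_per_kloc(change_records)
relative_increase = bug_rates["ai"] / bug_rates["human"] - 1
```

Attributing a bug to a specific origin is the hard part in practice; this sketch takes that attribution as given.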

Future Considerations and Industry Impact

The evolution of AI coding assistants will likely bring:

- Improved context understanding

- Better domain-specific knowledge

- Enhanced security features

- More sophisticated suggestion algorithms

- Deeper integration with development workflows

Emerging Best Practices

- Balanced approach to AI tool adoption

- Focus on developer education

- Regular policy updates

- Continuous performance monitoring

- Adaptive implementation strategies

Conclusion

The study reveals that while AI coding assistants offer significant potential benefits, successful implementation requires careful planning, robust governance, and ongoing monitoring. Organizations must balance the promise of increased productivity against potential quality risks, implementing appropriate guardrails while maintaining flexibility for future advancements in the technology.

The key to success lies in treating AI coding assistants not as a silver bullet, but as one component in a comprehensive development strategy. Organizations that approach implementation with careful consideration of both benefits and risks, while maintaining strong quality control measures, are most likely to achieve positive outcomes.

This expanded analysis provides organizations with a more detailed framework for evaluating and implementing AI coding assistants, emphasizing the importance of a balanced, strategic approach to adoption.
